TL;DR: Product validation tests whether your solution solves a real problem before full development. This guide covers how to use AI-generated apps for rapid testing, which validation methods prove genuine demand (user interviews, usability testing, landing pages, fake doors, and cross-platform app testing), and the specific metrics and thresholds that signal when you have enough evidence to ship.

You've spent weeks imagining the app you want to build. You can see the interface, the workflows, the value it delivers. You feel ready — but there's a problem you haven't solved yet. How can you be sure anyone actually wants it?

Building the wrong thing wastes months and burns through budgets faster than you'd expect; CB Insights found that 42% of startups fail because there is no market need. The old answer was to write specifications, hire developers, and hope for the best. The new answer, AI coding tools that generate prototypes in minutes, works brilliantly for initial testing. The problem comes after you're done validating: if you chose a tool that can't scale beyond prototyping, you'll have to rebuild everything, including features you've already validated, on a production-ready platform, and you'll lose weeks of momentum in the process.

In this guide, you'll learn how to validate products using AI-generated apps you can build quickly, but also control and scale. You'll understand which validation methods prove real demand, which metrics matter, and when you have enough evidence to launch. By the end, you'll know exactly how to test your idea without wasting time on guesswork.

What is product validation?

Product validation is testing whether your solution solves a real problem for specific users before you invest in full development. This means proving three things:

- Your target users experience the problem you think they do

- They want your specific solution

- They'll actually use or pay for it

This differs from market validation, which only confirms that general demand exists. Market validation asks "is there opportunity?" — product validation asks "does my solution capture it?"

It also differs from product verification, which tests whether what you built meets its technical specifications. Validation tests desirability and willingness to engage before you commit resources to building the full product.

Think of validation as answering this question: "Will managers actually use this performance review tool?" Not "do performance reviews happen?" or "does the tool load in three seconds?" Those questions matter, but validation comes first.

Successful validation requires evidence, not opinions. A friend saying "I'd totally use that" doesn't count. A potential customer completing a task with your app in under three minutes does. The difference between validation and guessing is whether you can point to specific behavior that proves your hypothesis.

Why product validation matters in 2026

Validation reduces waste by catching wrong turns before code hardens into something expensive to change. Teams that validate early pivot faster, build features people actually adopt, and avoid the costly mistake of launching products nobody wants. When you skip validation, you discover problems after you've already paid for them — in engineering time, opportunity cost, and team morale.

The stakes got higher in 2026. AI makes building real apps incredibly fast — Sonar's 2025 survey found 42% of code is AI-assisted — which means you can build the wrong thing faster than ever before. What used to take weeks now takes hours, but speed without direction just means you're lost at a higher velocity. This makes validation more critical, not less.

Here's what validation protects you from:

- Wasted development time. Building features nobody uses burns months you can't get back.

- Budget drain. Every dollar spent on the wrong solution is a dollar you can't spend on the right one.

- Team frustration. Nothing kills morale faster than shipping work that gets ignored.

- Missed opportunities. While you're building the wrong thing, competitors are validating and shipping the right one.

Validated ideas also attract investment and align teams. Demonstrating product-market fit with real evidence turns skeptics into supporters, because investors back traction over vision and stakeholders trust evidence over enthusiasm. When you can show that 23 out of 25 test users completed the core task successfully, debates about whether to ship turn into conversations about how to scale.

The breakthrough in 2026 is combining AI generation speed with visual editing control on a platform that scales with you. You can generate a working prototype in minutes using Bubble's AI app generator, then chat with the AI Agent to add features or switch to visual editing when you want precise control. When validation proves your idea works, you keep building on the same platform instead of rebuilding from scratch. This combination lets you validate faster without sacrificing the control you need to iterate on what you learn, and it preserves your momentum when you're ready to ship.

How to validate with AI-powered apps

Validation follows a systematic process that starts with defining what you're testing and ends with a decision based on evidence. Each step builds on the previous one, transforming vague ideas into testable hypotheses and converting user behavior into clear next steps. This isn't guesswork — it's a repeatable method for proving whether your product solves a real problem.

Define your target user and core hypothesis

Start with one specific user persona or job-to-be-done, not a broad market. Instead of "small business owners," focus on "solo HR managers at 50-person companies who manually track PTO in spreadsheets." The narrower your initial target, the clearer your validation signals become. You'll expand later — right now, you need specificity.

Write your hypothesis as a testable statement with clear success criteria. "Small business owners will complete invoice creation in under three minutes" beats "users will like the invoice feature" because one gives you a measurable threshold and the other just measures feelings. Good hypotheses predict behavior, not opinions.

Your hypothesis should answer three questions: Who is this for? What will they do? How will you know it worked? If you can't answer all three, you're not ready to test yet.
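One way to keep yourself honest is to write the hypothesis down as structured data before any testing starts. The sketch below is a minimal illustration in Python, not a Bubble feature: the `Hypothesis` class, its field names, and the 80% completion target are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """Hypothetical container for the three questions a hypothesis must answer."""
    persona: str                 # Who is this for?
    task: str                    # What will they do?
    max_seconds: float           # How will you know it worked? (time threshold)
    min_completion_rate: float   # Share of users who must finish unaided (assumed)

    def is_testable(self) -> bool:
        # A hypothesis is testable only if all three questions have concrete answers.
        return (
            bool(self.persona.strip())
            and bool(self.task.strip())
            and self.max_seconds > 0
            and 0 < self.min_completion_rate <= 1
        )


invoice_hypothesis = Hypothesis(
    persona="small business owners",
    task="create an invoice",
    max_seconds=180,             # "under three minutes"
    min_completion_rate=0.8,     # illustrative target, set before testing
)
print(invoice_hypothesis.is_testable())
```

If `is_testable()` returns `False`, at least one of the three questions is still unanswered, and the test isn't ready to run.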

Generate and test your app

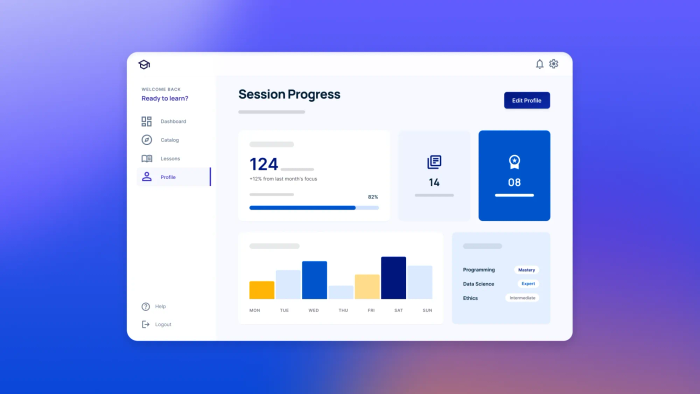

Use Bubble AI to generate complete web and native mobile apps that demonstrate your core value proposition. In minutes, you get working UI, visual workflows, database structure, and sample data that let users experience your solution as a real app, not just screenshots or code you can't edit.

On Bubble, you vibe code without the code — chat with the AI Agent when you want speed to generate interfaces, workflows, data types with automatic privacy rules, and dynamic expressions, or edit directly in the visual editor when you want precise control. The Agent builds in front of you so you always see exactly what changed, and you can seamlessly switch between AI and visual editing at any time.

Add realistic sample data and necessary integrations using Bubble's built-in API Connector (available even on the Free tier) to connect to external services. There are also 300+ Bubble plugins for enhanced functionality. When testing your prototype, users need enough context to use your app as they would in production. If you're building an invoice tool, include sample client names, amounts, and payment terms. If you're building a scheduling app, populate it with realistic appointments and conflicts.

Set up key event tracking before anyone touches your app: track task completion, timing, and where people drop off. This preparation takes an extra hour upfront but saves days of cleanup later. When you use the Agent to generate data types, privacy rules are created automatically to secure your data, so security is built in from the start rather than added as an afterthought.

Run user sessions and gather data

Recruit representative users: people who match your narrow persona and currently experience the problem you're solving. Testing with 5–8 users per segment reveals up to 85% of usability issues, but you'll need more for quantitative metrics. Pay them if you can; unpaid testing often attracts people who don't actually experience your problem.
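The 85% figure is usually traced to a simple discovery model from usability research: if each tester independently has probability p of running into a given problem, then n testers are expected to uncover 1 - (1 - p)^n of the problems. The sketch below assumes the commonly cited value p = 0.31, which may not hold for your product, and the function name is hypothetical.

```python
def share_of_issues_found(n_users: int, p_detect: float = 0.31) -> float:
    """Expected share of usability problems seen at least once by n_users testers.

    Assumes every tester hits any given problem independently with the same
    probability p_detect (0.31 is a commonly cited average, not a constant).
    """
    if n_users < 0 or not 0 <= p_detect <= 1:
        raise ValueError("n_users must be >= 0 and p_detect must be in [0, 1]")
    return 1 - (1 - p_detect) ** n_users


for n in (1, 3, 5, 8):
    print(n, round(share_of_issues_found(n), 2))
```

Under these assumptions, five testers surface roughly 84% of problems, and each additional tester adds less than the one before. That is why small rounds find usability issues efficiently but say little about rates and percentages.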

Focus sessions on task completion, not opinions. Ask users to complete specific jobs with your app while you observe:

- "Create an invoice for a client who owes you $500"

- "Schedule a meeting with three people who have conflicting availability"

- "Find last month's expense report and export it"

Watch where they hesitate, what they click first, and whether they complete the task without help. Capture both qualitative observations (frustration, confusion, delight) and quantitative metrics (time, clicks, success rate).
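The quantitative side of a session can be summarized with a few lines of analysis once the observations are written down. The following is a sketch in Python under assumed conditions: the session records, field names, and `summarize` function are hypothetical, and the numbers are made up for illustration.

```python
from statistics import median

# Hypothetical observations from five moderated sessions on the invoice task.
sessions = [
    {"user": "u1", "completed": True, "seconds": 150, "clicks": 12},
    {"user": "u2", "completed": True, "seconds": 170, "clicks": 15},
    {"user": "u3", "completed": False, "seconds": 300, "clicks": 31},
    {"user": "u4", "completed": True, "seconds": 200, "clicks": 18},
    {"user": "u5", "completed": True, "seconds": 160, "clicks": 11},
]


def summarize(sessions):
    """Return success rate plus median time and clicks for completed sessions."""
    if not sessions:
        raise ValueError("need at least one session")
    done = [s for s in sessions if s["completed"]]
    return {
        "success_rate": len(done) / len(sessions),
        # Medians are computed over successful attempts only, so one abandoned
        # session does not distort the time-on-task figure.
        "median_seconds": median(s["seconds"] for s in done) if done else None,
        "median_clicks": median(s["clicks"] for s in done) if done else None,
    }


summary = summarize(sessions)
print(summary)
```

Medians are used here rather than means because a single slow session can skew an average badly in a sample this small.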

When collecting test user data, be aware that Bubble provides a GDPR-compliant Data Processing Addendum (DPA) covering EU data protection laws and US privacy laws like CCPA, with Standard Contractual Clauses for international transfers. Note that Bubble's terms prohibit submitting sensitive/restricted data to the platform.

Analyze results and make decisions

Look for patterns in both behavior and feedback. If 7 out of 8 users hesitate at the same step, that's a signal. If task completion takes four minutes when you hypothesized three, that matters. Separate blockers you can resolve (confusing labels, hidden buttons) from fundamental issues (nobody wants this feature, the value proposition doesn't resonate).
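Spotting a shared friction point can be as simple as counting how many users stumbled at each step. The sketch below is illustrative only: the step names, the session notes, and the 50% cutoff in `shared_friction` are assumptions, not a standard.

```python
from collections import Counter

# Hypothetical notes: the steps where each of eight users visibly hesitated.
hesitations = {
    "u1": ["add_line_item"],
    "u2": ["add_line_item", "send_invoice"],
    "u3": ["add_line_item"],
    "u4": [],
    "u5": ["add_line_item"],
    "u6": ["add_line_item"],
    "u7": ["add_line_item", "choose_client"],
    "u8": ["add_line_item"],
}


def shared_friction(hesitations, min_share=0.5):
    """Map each step to the share of users who hesitated there, keeping common ones."""
    n_users = len(hesitations)
    # set(steps) counts each user at most once per step.
    counts = Counter(step for steps in hesitations.values() for step in set(steps))
    return {
        step: count / n_users
        for step, count in counts.items()
        if count / n_users >= min_share
    }


print(shared_friction(hesitations))
```

Steps that only one user struggled with drop out of the result, which helps separate a pattern from an individual quirk.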

Decide whether to ship, iterate, or pivot based on evidence, not attachment to your original idea. Document your learnings for future validation cycles and your product roadmap. The analysis isn't complete until you've written down what you learned, which hypothesis was proven or disproven, and what you're changing as a result.

This documentation keeps teams aligned and prevents relitigating the same questions every sprint. When someone asks "why did we build it this way?" you can point to the validation evidence that drove the decision.

Validation methods that prove real demand

Different methods serve different purposes in the validation process. Some help you understand why users behave a certain way, others measure how many will actually convert. You need both qualitative methods (which reveal context and motivation) and quantitative methods (which confirm patterns at scale) to make confident decisions.

The methods below work particularly well with AI-generated apps because you can create realistic experiences quickly, measure real behavior, and iterate based on what you learn.

User interviews and usability testing

User interviews help you understand the problem space, current workarounds, and whether your value proposition resonates. Ask open-ended questions about their workflow, frustrations, and what they've tried before. "Walk me through how you handle time-off requests today" teaches you more than "would you use an automated PTO tracker?"

Listen for intensity — urgent problems get solved, mild annoyances get tolerated. When someone says "I spend 3 hours every Friday doing this and I hate it," that's a problem worth solving. When they say "yeah, that could be better," it probably isn't urgent enough to drive adoption.

Usability tests observe actual behavior with your app. Give users specific tasks and watch whether they complete them without moderator help:

- Can they find the feature without being told where to look?

- Do they understand what each button does?

- Can they complete the task in a reasonable time?

- Do they make errors or need to backtrack?

Success means they understood the interface, found the right actions, and achieved their goal independently. Failure means you need to redesign before investing more.

Landing page experiments and fake doors

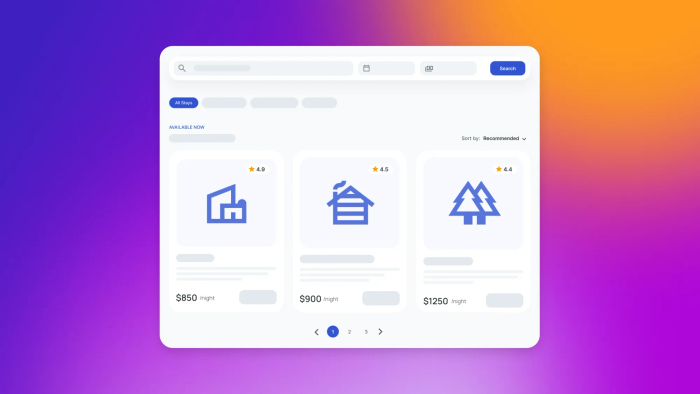

Landing pages test demand before you build full features. Drive qualified traffic to a page that describes your solution, then measure meaningful actions: not just visits, but email signups, demo requests, or pre-orders. The conversion rate tells you whether people want what you're offering enough to take a concrete step toward getting it.

Fake door tests validate individual features by showing them in your interface before they're built. When users click, explain that the feature isn't available yet and ask if they'd like updates when it launches. Measure click-through rates and signup rates. If nobody clicks, that feature wasn't as compelling as you thought. If conversion is high, you've validated demand without building the feature.
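The two fake door rates answer different questions, so it helps to compute them separately. In this sketch the function name and the counts are hypothetical: click-through rate is measured against everyone who saw the entry point, and signup rate against only those who clicked.

```python
def fake_door_rates(exposed: int, clicks: int, signups: int) -> dict:
    """Click-through rate among exposed users and signup rate among clickers."""
    if not 0 <= signups <= clicks <= exposed:
        raise ValueError("expected 0 <= signups <= clicks <= exposed")
    return {
        "click_through_rate": clicks / exposed if exposed else 0.0,
        "signup_rate": signups / clicks if clicks else 0.0,
    }


# Illustrative numbers: 60 users saw the button, 12 clicked, 6 asked for updates.
rates = fake_door_rates(exposed=60, clicks=12, signups=6)
print(rates)
```

A high click-through rate with a low signup rate suggests curiosity rather than demand, which is worth following up in interviews.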

App testing across web and mobile

Test the same core flows on both web and native mobile platforms — Bubble lets you generate iOS and Android apps from the same editor with a shared backend, data, and workflows.

Measure consistent metrics across both platforms: task completion rate, time-on-task, and user activation. If web users complete the core task but mobile users drop off, you know the value works but the mobile interface needs iteration. Testing both platforms early prevents discovering usability problems after you've committed to a single approach.

| Method | Best For | Key Metrics | Sample Size |
|---|---|---|---|
| User Interviews 💬 | Understanding problem context and user motivations | Qualitative insights, pattern recognition in pain points | 5–8 per segment |
| Usability Testing 🎯 | Observing actual behavior with apps | Task completion rate, time-on-task, error rate | 5–8 per segment |
| Landing Pages 📄 | Testing demand before building features | Conversion to signup, cost per qualified lead | 100+ visitors minimum |
| Fake Door Tests 🚪 | Validating individual features quickly | Click-through rate, signup rate when unavailable | 50+ users exposed |
| App Testing 📱 | Validating web and native mobile experience from one platform | Activation rate, retention, cross-platform consistency | 25+ per platform |
| Mobile Testing 📲 | Confirming native mobile experience works | Mobile task completion, gesture recognition, platform-specific usability | 15+ per platform (iOS/Android) |

Metrics that signal when to move forward

Validation isn't complete until you've defined specific thresholds that determine whether you ship, iterate, or abandon an idea. Qualitative signals provide context and help you understand why users behave a certain way. Quantitative benchmarks give you numbers to compare against and thresholds that trigger decisions.

Qualitative success signals

Strong qualitative signals indicate users genuinely value your solution. When users ask to keep access after testing ends, you've solved a problem worth solving. When they explain the value back to you in their own words — not parroting your marketing copy — they understand what you've built and why it matters.

Watch for these behaviors during testing:

- Unprompted questions about pricing. "How much will this cost when it launches?"

- Timeline inquiries. "When can I actually start using this?"

- Integration questions. "Does this work with the tools I already use?"

- Replacement statements. "This would replace the three tools I use now."

These signal intent to adopt, not polite interest. Users who care about practical details are imagining themselves using your product in their actual workflow. Users who only offer general encouragement probably won't convert when launch day arrives.

Quantitative benchmarks to track

Task completion rates above 80% indicate your core experience works. A rate below that threshold suggests fundamental usability problems you must fix before launch. Time-on-task should match or beat current solutions: if your "simpler" tool takes twice as long as the manual process, you haven't delivered on your value proposition yet.

User activation within seven days shows whether people return after initial signup. Track what percentage of users complete your core action within that window. For meaningful validation, you need at least 25 users in a cohort to identify reliable patterns. Smaller samples work for finding obvious problems, but decision-making requires enough data to separate signal from noise.
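Seven-day activation can be computed from two timestamps per user: when they signed up and when they performed the core action. The sketch below assumes you can export those timestamps; the `activation_rate` function, the data layout, and the inclusive window are illustrative choices, and the four-user cohort is deliberately tiny to keep the example readable.

```python
from datetime import datetime, timedelta


def activation_rate(signups, core_actions, window_days=7):
    """Share of signed-up users with a core action within window_days of signup."""
    if not signups:
        raise ValueError("need at least one signup")
    window = timedelta(days=window_days)
    activated = sum(
        1
        for user, signed_up in signups.items()
        if any(signed_up <= t <= signed_up + window for t in core_actions.get(user, []))
    )
    return activated / len(signups)


# Hypothetical cohort (a real cohort should have 25 or more users).
signups = {
    "u1": datetime(2026, 1, 5),
    "u2": datetime(2026, 1, 5),
    "u3": datetime(2026, 1, 6),
    "u4": datetime(2026, 1, 7),
}
core_actions = {
    "u1": [datetime(2026, 1, 6)],   # one day after signup: activated
    "u2": [datetime(2026, 1, 20)],  # fifteen days after signup: outside the window
    "u3": [datetime(2026, 1, 13)],  # exactly seven days: counts, window is inclusive
}
rate = activation_rate(signups, core_actions)
print(rate)
```

Users with no recorded core action, like `u4` here, still count in the denominator; leaving them out would inflate the rate.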

Conversion rates from landing pages to meaningful actions vary by industry, but 5–10% is a reasonable benchmark for B2B tools targeting specific personas. Consumer products often convert lower but compensate with higher volume. Compare your numbers against similar products in your space, not against generic industry averages that might not reflect your context.
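A conversion rate measured on a small sample carries a wide margin of error, which matters when you compare it against a benchmark range. The sketch below uses the Wilson score interval, a standard way to put bounds on a proportion; the function name and the example counts are assumptions for illustration.

```python
from math import sqrt


def wilson_interval(conversions: int, visitors: int, z: float = 1.96):
    """Approximate 95% Wilson score interval for a conversion rate (z = 1.96)."""
    if visitors <= 0 or not 0 <= conversions <= visitors:
        raise ValueError("need visitors > 0 and 0 <= conversions <= visitors")
    p = conversions / visitors
    denom = 1 + z**2 / visitors
    center = (p + z**2 / (2 * visitors)) / denom
    half = z * sqrt(p * (1 - p) / visitors + z**2 / (4 * visitors**2)) / denom
    return center - half, center + half


# Illustrative numbers: 8 signups from 100 qualified visitors.
low, high = wilson_interval(conversions=8, visitors=100)
print(f"{low:.1%} to {high:.1%}")
```

With 100 visitors, an observed 8% is consistent with a true rate anywhere from roughly 4% to 15%, so it cannot tell you whether you are below, inside, or above a 5–10% benchmark. More traffic narrows the interval.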

Moving from validation to launch

Validation isn't a one-time gate you pass through — it's a continuous practice that extends from initial concept through post-launch iteration. The systematic approach you've learned here applies whether you're testing your first app or your fiftieth feature. Start with a clear hypothesis, gather evidence through multiple methods, measure both behavior and motivation, and make decisions based on thresholds you defined before testing began.

With Bubble, you can seamlessly switch between AI and visual editing to iterate faster without losing control. When validation reveals problems, you can chat with the Agent for quick changes or edit directly in the visual editor for precise control — adjusting your app in hours instead of weeks. This speed matters because validation works best as a tight feedback loop — build, test, learn, adjust, repeat.

Remember that evidence-based decisions beat intuition every time. Your assumptions about what users want are hypotheses until proven with data. The users who complete tasks, convert on landing pages, and return to your product are telling you what actually matters. Listen to their behavior more than their opinions, measure outcomes more than feelings, and you'll ship products people genuinely want to use.

The path from idea to launch shortens dramatically when you validate continuously. You catch wrong turns early, iterate based on real feedback, and build confidence with every successful test. Start validating today — define your hypothesis, generate your app on Bubble, and put it in front of real users. The evidence you gather will guide every decision from here forward.

Frequently asked questions

How many test users do I need to validate my product idea reliably?

For usability testing, 5–8 users per key segment reveals most critical issues. For quantitative metrics like retention or task completion rates, aim for at least 25 users in a cohort to identify meaningful patterns that justify shipping.

What specific metrics prove my product idea is worth building?

Focus on task completion rates above 80%, user activation within seven days, and concrete demand signals like email signups or pre-orders from qualified prospects rather than social media engagement or general enthusiasm.

Can I validate my product's pricing before building the full version?

Yes. Use landing pages with tiered pricing options, refundable deposits, or pilot contracts with opt-out clauses to test willingness to pay before full development. Track which price points generate the most qualified conversions.

How do I validate both web and mobile app versions efficiently?

Generate native iOS and Android apps alongside your web app using Bubble AI — all from one platform with a shared backend, data, and workflows. Test the same core tasks on both platforms and measure consistent metrics. Bubble's native mobile apps are built on React Native and share everything with your web app, so you build once and deploy everywhere. Mobile development is available on all paid plans with build submission limits (5–20 per month depending on tier), multiple live app versions, and unlimited over-the-air updates.

What's the difference between validating my product versus validating the market?

Market validation proves general demand exists in a market, while product validation confirms your specific solution solves the problem for your target users and they'll actually use or pay for it.

Build for as long as you want on the Free plan. Only upgrade when you're ready to launch.
